|

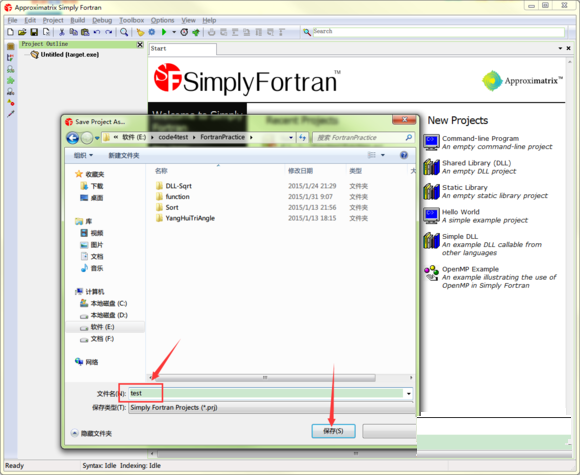

- 2008: Releases a 'Roll' for Clustercorp's Rocks Cluster Distribution that includes Absoft Pro Fortran 10.1 and is compatible with Rocks+ 4.3 and its open-source software stack.
- 2007: Absoft releases Pro Fortran 10.1 with tuning for multi-core AMD and Xeon processors for both 32-bit and 64-bit executables.
- 2007: 64-bit executables on Microsoft Windows and Mac OS X.
- 2006: AnCAD MATFOR libraries for Linux and Windows released.
- 2006: IMSL 5.0 for 64-bit Intel/AMD Linux released.
- 2006: Mac OS X Intel Pro Fortran released. The profiler and bundled C/C++ compiler were dropped to allow compatibility with system C compilers and linkers.
- 2005: With version 10.0, the previously bundled Absoft C/C++ compiler was dropped in favor of using universally available C/C++ compilers on each platform directly from the IDE.
- 2005: 64-bit executables on the Macintosh.
- 2004: IBM contract to develop the HPC SDK for POWER, POWER4 and POWER5 architectures.
- 2004: Release of IBM XL Fortran and XL C/C++ for Mac OS (PPC).
- 2003: First compiler that produces 64-bit executables (Linux).
- 1997: Release of Linux Fortran as produced for CERN to port ESPACE code to Linux.
- 1994: Release of Fortran for Microsoft Windows.
- 1994: Release of Absoft Fortran for Mac PPC (still available!).


- 04N - Evidence of roaches or live roaches present in facility's food and/or non-food areas.
- 04M - Evidence of mice or live mice present in facility's food and/or non-food areas.
- 04K - Appropriately scaled metal stem-type thermometer not provided or used to evaluate temperatures of potentially hazardous foods during cooking, cooling, reheating and holding.
- 04H - Food in contact with utensil, container, or pipe that consist of toxic material.
- 04C - Food worker does not use proper utensil to eliminate bare hand contact with food that will not receive adequate additional heat treatment.
- 04A - Food Protection Certificate not held by supervisor of food operations.
- 02G - Cold food held above 41F (smoked fish above 38F) except during necessary preparation.
- 02B - Hot food item not held at or above 140F.

- 08A (General Violations Conditions - Vermin/Garbage).
- 06A (Critical Violations - Personal Hygiene & Other Food Protection).
- 04M (Critical Violations - Food Protection).
- 04K (Critical Violations - Food Protection).
- 10F (General Violations Conditions - Facility Maintenance).
- 04H (Critical Violations - Food Protection).
- 04C (Critical Violations - Food Protection).
- 04A (Critical Violations - Food Protection).
- 02B (Critical Violations - Food Temperature).
- 10B (General Violations Conditions - Facility Maintenance).
- 06E (Critical Violations - Personal Hygiene & Other Food Protection).
- 05D (Critical Violations - Facility Design).

Apache Tika is an open source toolkit that detects and extracts metadata and text from many different file types (like PDF, DOC, XLS, PPT etc.). The “Ingest Attachment” plugin uses the Apache Tika library to extract data from these file types and then stores the clear-text contents in Elasticsearch as JSON documents. The plugin also stores the full-text extract of each file as an element within the JSON document.
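To make the attachment flow a little more concrete, here is a minimal sketch of the two JSON bodies involved: one defining an ingest pipeline with an `attachment` processor, and one carrying a base64-encoded file. The helper names, the `data` field and the `filename` field are my own choices for illustration, and the endpoints in the docstrings are assumptions about a typical setup rather than something taken from this article.

```python
import base64
import json


def attachment_pipeline(field: str = "data") -> dict:
    """Body for `PUT _ingest/pipeline/<name>`: one attachment processor reading `field`."""
    return {
        "description": "Extract text and metadata from uploaded files",
        "processors": [{"attachment": {"field": field}}],
    }


def attachment_document(raw: bytes, filename: str, field: str = "data") -> dict:
    """Body for `PUT <index>/_doc/<id>?pipeline=<name>`; the file travels base64-encoded."""
    return {
        "filename": filename,  # hypothetical extra field, not required by the plugin
        field: base64.b64encode(raw).decode("ascii"),
    }


if __name__ == "__main__":
    # Print both bodies so they can be pasted into a REST client or sent with any HTTP library.
    print(json.dumps(attachment_pipeline(), indent=2))
    print(json.dumps(attachment_document(b"hello world", "hello.txt"), indent=2))
```

With the processor's default settings, the extracted text should end up under an `attachment` object in the stored document, next to the original base64 field.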

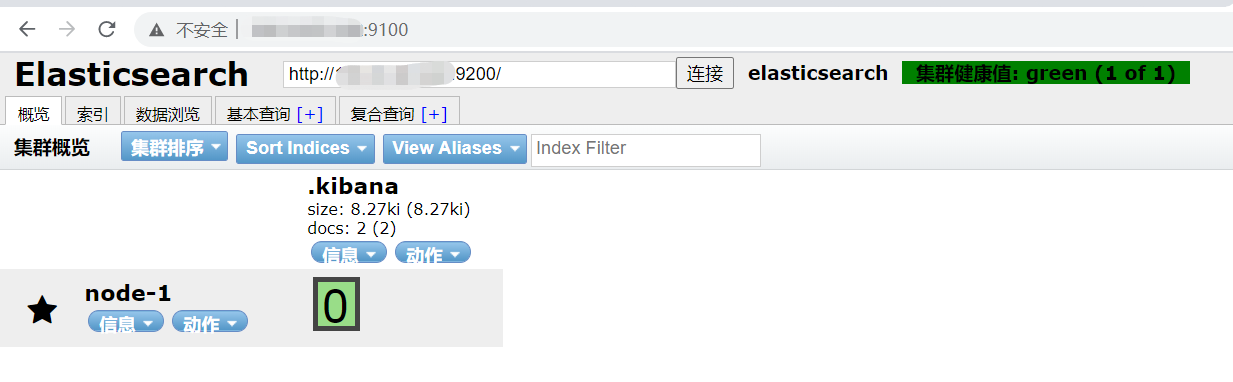

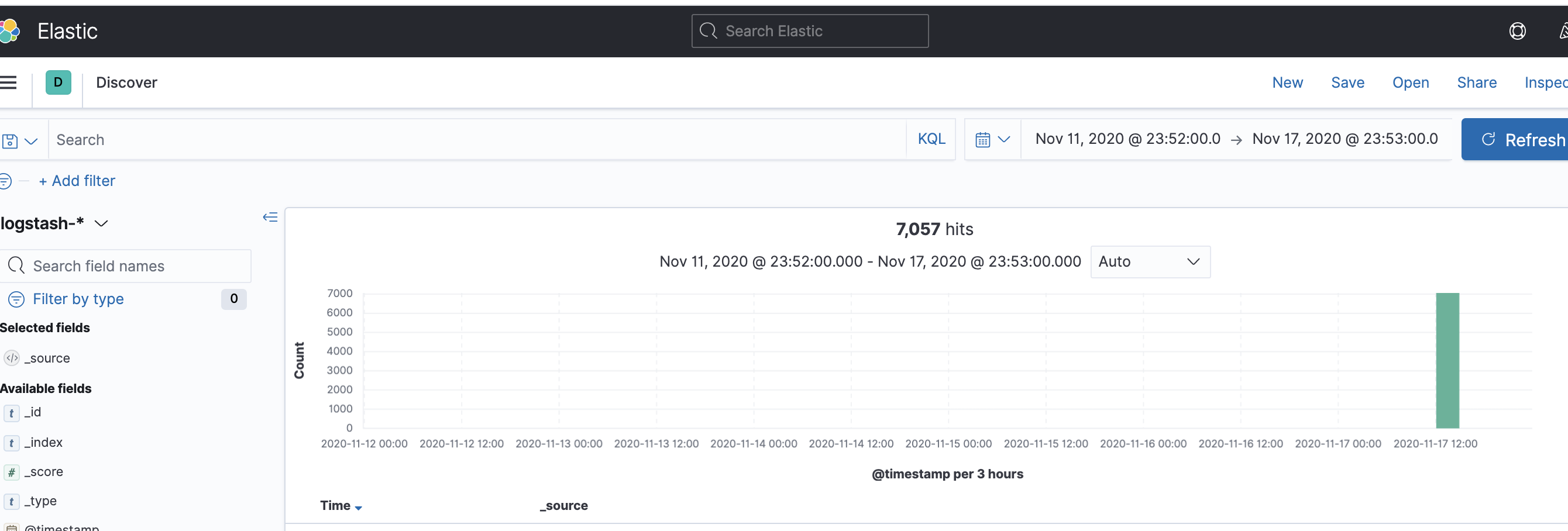

Elasticsearch uses Apache Lucene under the hood as the search engine for the documents it keeps in its data store.

Data (more specifically, documents) in Elasticsearch is stored in indexes, which are in turn split across multiple shards. A document belongs to one index and one primary shard within that index. Shards allow parallel data processing for the same index. You can replicate shards across multiple replicas to provide fail-over and high availability. The cluster diagram shows how data is logically distributed across an Elasticsearch cluster comprising three nodes. A node is a physical server or a virtual machine (VM).

The workflow diagram shows two user-driven workflows in which the Elasticsearch cluster is used by web applications. Users upload files through the web application's user interface. Behind the scenes, the web application uses the Elasticsearch API to send the files to an ingest node of the Elasticsearch cluster. The web application can be written in Java (JEE), Python (Django), Ruby on Rails, PHP etc. Depending on your choice of programming language and application framework, you can pick the appropriate API to interact with the Elasticsearch cluster.

Elasticsearch provides the “Ingest Attachment” plugin to ingest documents into the cluster. Users use the web application user interface to search for documents (like PDF, XLS, DOC etc.) that are ingested into the Elasticsearch cluster (via “workflow 1”). The “Ingest Attachment” plugin is based on the open source Apache Tika project.
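The relationship between an index, its primary shards and their replicas can be sketched in a few lines. The settings body uses the `number_of_shards` and `number_of_replicas` index settings; the routing function is only a toy, with CRC32 standing in for Elasticsearch's real routing hash, and all function names are hypothetical.

```python
import zlib


def index_settings(primary_shards: int = 3, replicas: int = 1) -> dict:
    """Body for `PUT <index>`: shard and replica counts for a new index."""
    return {
        "settings": {
            "number_of_shards": primary_shards,
            "number_of_replicas": replicas,
        }
    }


def toy_shard_for(doc_id: str, primary_shards: int) -> int:
    """Illustrative only: CRC32 is an assumption, not the hash Elasticsearch uses."""
    return zlib.crc32(doc_id.encode("utf-8")) % primary_shards


def total_shard_copies(primary_shards: int, replicas: int) -> int:
    """Every primary shard exists once plus `replicas` additional copies."""
    return primary_shards * (1 + replicas)


if __name__ == "__main__":
    print(index_settings())
    for doc_id in ("invoice-1", "invoice-2", "report-7"):
        print(doc_id, "-> primary shard", toy_shard_for(doc_id, 3))
    print("shard copies in the cluster:", total_shard_copies(3, 1))
```

The point of the toy routing function is that the same document ID always maps to the same primary shard, which is why a document belongs to exactly one primary shard within its index.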

In the ELK+ stack, Filebeat provides a lightweight way to send log data either directly to Elasticsearch or to Logstash for further transformation before it is sent to Elasticsearch for storage. Filebeat provides many out-of-the-box modules to process data, and these modules can be set up with minimal configuration to start shipping logs to Elasticsearch and/or Logstash.

Logstash is the log analysis platform for the ELK+ stack. It has inbuilt filters and scripting capabilities to perform analysis and transformation of data from various log sources (Filebeat being one such source) before sending information to Elasticsearch for storage. The question is when to use Logstash versus Filebeat. Typically you can consider Logstash the “big daddy” of Filebeat. Logstash requires a JVM and is a heavyweight alternative to Filebeat, which requires minimal configuration in order to collect log data from client nodes. Filebeat can be used as a lightweight log shipper and as one of the sources of data for Logstash, which can then act as an aggregator and perform further analysis and transformation on the data before it is stored in Elasticsearch.

Elasticsearch is the NoSQL data store for storing documents. The term “document” in Elasticsearch nomenclature means JSON-type data. Since the data is stored in JSON format, it allows a schema-less layout, deviating from traditional RDBMS-type schema layouts and allowing JSON elements to be changed with no impact on existing data. This benefit comes from the schema-less nature of the Elasticsearch data store and is not unique to Elasticsearch. DynamoDB, MongoDB, DocumentDB and many other NoSQL data stores provide similar capabilities.

I am a total newbie in ELK, but I'm currently deploying the ELK stack via docker-compose (TLS part). Elasticsearch and Kibana work correctly over HTTPS. However, I don't understand how to enable Filebeat over HTTPS. I would like to send my nginx logs, which are located on another server (over the internet, so I do not want to send logs in clear text).

Everything works fine over HTTP, but when I switch to HTTPS and reload Filebeat I get the following message: Error. I know I'm doing something wrong, but I can't find the answer for Filebeat over HTTPS. Here is my Filebeat configuration: `output.elasticsearch:` followed by the stock comments ``# Protocol - either `http` (default) or `https`.`` and `# Authentication credentials - either API key or username/password.` |
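Since the actual error message is not shown, the following is only a sketch of what an HTTPS-enabled `output.elasticsearch` section commonly looks like, rendered from Python so the values are easy to swap. It assumes the docker-compose TLS setup produced a CA certificate that Filebeat must trust. The host name, CA path, user name and the `ES_PWD` environment variable are all hypothetical placeholders, and this may not address the specific failure in the question.

```python
def render_filebeat_output(host: str, ca_path: str, username: str, password: str) -> str:
    """Return an `output.elasticsearch` section that uses HTTPS and trusts one CA file."""
    lines = [
        "output.elasticsearch:",
        f'  hosts: ["https://{host}:9200"]',               # scheme given explicitly in the host URL
        '  protocol: "https"',
        f'  username: "{username}"',
        f'  password: "{password}"',
        f'  ssl.certificate_authorities: ["{ca_path}"]',   # CA that signed the Elasticsearch certificate
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # All values below are placeholders; ${ES_PWD} relies on Filebeat's environment variable expansion.
    print(render_filebeat_output(
        host="es.example.com",
        ca_path="/etc/filebeat/certs/ca.crt",
        username="filebeat_writer",
        password="${ES_PWD}",
    ))
```

If the Elasticsearch certificate was issued for a different host name than the one Filebeat connects to, verification can still fail even with the right CA, so checking the certificate's subject names against the `hosts` entry is a reasonable first step.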

|
